In large music catalogs, artist ambiguities, such as different artists sharing the same name or a single artist appearing under several distinct names, are commonplace. Metadata is not always sufficient to resolve these ambiguities, especially for lesser-known artists with little associated metadata.

In this paper, we propose using metric learning to leverage audio as a source of information for music artist disambiguation.
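Metric learning trains an embedding so that distances reflect identity: tracks by the same artist should end up close together, and tracks by different artists far apart. A minimal sketch of one common objective of this kind, the hinge triplet loss on Euclidean distance, is shown below. The function names and the margin value of 0.2 are hypothetical choices for illustration, and this is not necessarily the exact loss or distance used in the paper.

```python
import math

def euclidean(a, b):
    """Euclidean distance between two equal-length embedding vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def triplet_loss(anchor, positive, negative, margin=0.2):
    """Hinge triplet loss (hypothetical margin of 0.2).

    `anchor` and `positive` are embeddings of tracks by the same artist,
    `negative` is an embedding of a track by a different artist. The loss
    is zero once the negative is at least `margin` farther from the anchor
    than the positive; otherwise it penalizes the violation.
    """
    d_pos = euclidean(anchor, positive)
    d_neg = euclidean(anchor, negative)
    return max(0.0, d_pos - d_neg + margin)

# A well-separated triplet incurs no loss; a negative that sits too close does.
print(triplet_loss([0.0, 0.0], [0.1, 0.0], [1.0, 0.0]))
print(triplet_loss([0.0, 0.0], [0.1, 0.0], [0.2, 0.0]))
```

Minimizing this loss over many triplets, with the embeddings produced by a network fed with audio, is what pushes same-artist tracks together in the learned space.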

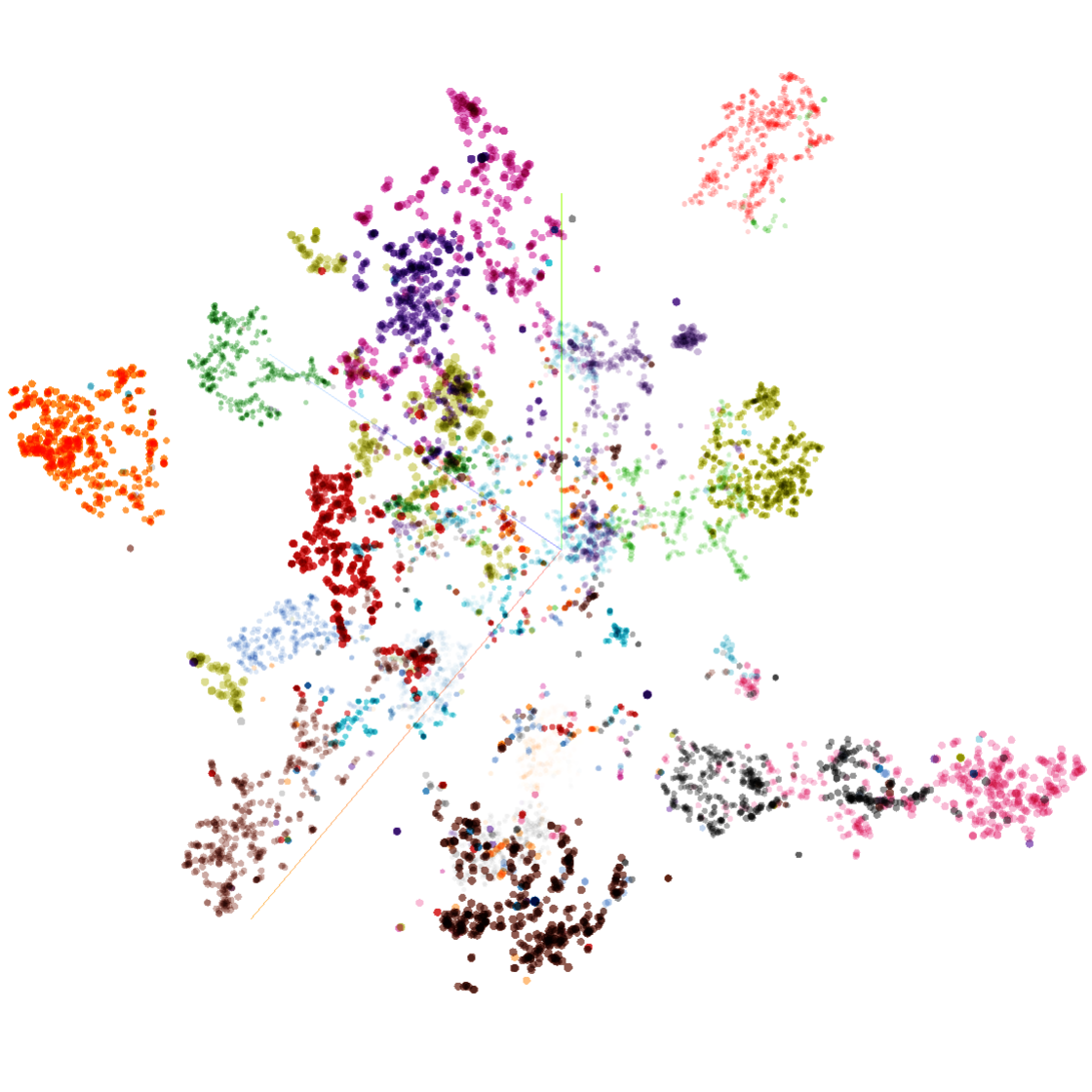

We evaluate embeddings learned from audio on an artist classification task using a common benchmark dataset, and we address a clustering task on a dataset of homonymous artists. The results show that our system can discriminate between artists it has not seen during training, which makes it usable for disambiguation in large-scale catalogs where new artists are continually added.
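The clustering task can be pictured as grouping the audio embeddings of all tracks filed under one artist name, so that each resulting cluster corresponds to one distinct artist. The sketch below assumes precomputed embeddings and uses simple threshold-based single-linkage clustering as a stand-in; the function `cluster_by_threshold` and the threshold value are hypothetical, and the clustering method used in the paper may differ.

```python
import math

def euclidean(a, b):
    """Euclidean distance between two equal-length embedding vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def cluster_by_threshold(embeddings, threshold):
    """Single-linkage clustering with a distance threshold (hypothetical helper).

    Tracks whose embeddings are within `threshold` of each other, directly
    or through a chain of such neighbors, are assigned the same cluster.
    Returns one integer label per track, numbered 0, 1, 2, ... in order of
    first appearance.
    """
    n = len(embeddings)
    parent = list(range(n))

    def find(i):
        # Union-find root lookup with path halving.
        while parent[i] != i:
            parent[i] = parent[parent[i]]
            i = parent[i]
        return i

    # Merge every pair of tracks that are close enough in embedding space.
    for i in range(n):
        for j in range(i + 1, n):
            if euclidean(embeddings[i], embeddings[j]) <= threshold:
                parent[find(i)] = find(j)

    # Relabel cluster roots as consecutive integers.
    labels, roots = [], {}
    for i in range(n):
        r = find(i)
        if r not in roots:
            roots[r] = len(roots)
        labels.append(roots[r])
    return labels

# Four tracks sharing one artist name, hypothetically from two different artists.
tracks = [[0.0, 0.0], [0.1, 0.0], [5.0, 5.0], [5.1, 5.0]]
print(cluster_by_threshold(tracks, threshold=0.5))
```

Because the embedding is learned to separate artists in general rather than to recognize a fixed list of them, the same procedure applies to artists added to the catalog after training.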

This paper has been published in the proceedings of the 19th International Society for Music Information Retrieval Conference (ISMIR 2018).