Extensive work has tackled Language Identification (LID) in the speech domain, but its application to the singing voice lags behind, and performance on Singing Language Identification (SLID) can be improved by leveraging recent progress made in other singing-related tasks.

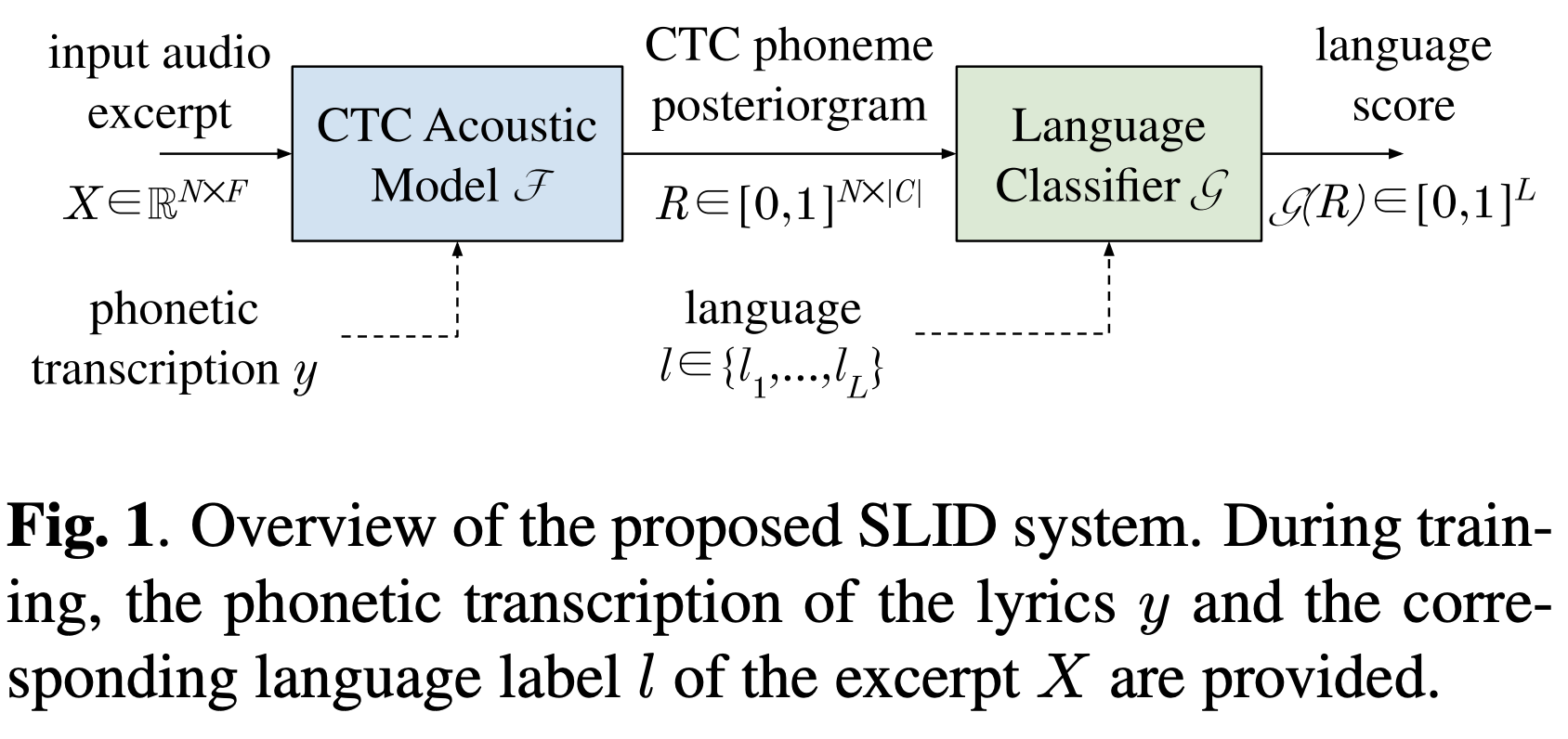

This work presents a modernized phonotactic system for SLID on polyphonic music: phoneme recognition is performed with a Connectionist Temporal Classification (CTC)-based acoustic model trained on multilingual data, followed by language classification with a recurrent model based on the phoneme estimates. The full pipeline is trained and evaluated on a large, publicly available dataset, with unprecedented performance. First results of SLID with out-of-set languages are also presented.
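As a rough illustration of the phoneme recognition stage, the sketch below shows standard greedy CTC decoding: take the most likely symbol in each frame, merge consecutive repeats, and drop the blank symbol. It is a minimal sketch and not the authors' implementation. The function name `ctc_greedy_decode`, the blank index `0`, and the toy posteriors are assumptions made for the example, and the paper's language classifier may consume the phoneme estimates in a different form than a decoded index sequence.

```python
from itertools import groupby

BLANK = 0  # assumed index of the CTC blank symbol (hypothetical choice)


def ctc_greedy_decode(frame_posteriors, blank=BLANK):
    """Collapse per-frame posteriors into a sequence of phoneme indices."""
    # Pick the most likely symbol in every frame.
    best = [max(range(len(frame)), key=frame.__getitem__)
            for frame in frame_posteriors]
    # Merge consecutive repeats, then drop blanks.
    return [symbol for symbol, _ in groupby(best) if symbol != blank]


# Toy posteriors over {blank, phoneme 1, phoneme 2} for five frames.
posteriors = [
    [0.10, 0.80, 0.10],
    [0.20, 0.70, 0.10],
    [0.90, 0.05, 0.05],
    [0.10, 0.60, 0.30],
    [0.10, 0.20, 0.70],
]
decoded = ctc_greedy_decode(posteriors)
```

The blank frame between the two runs of phoneme 1 is what lets CTC represent a repeated phoneme: without it, the two runs would be merged into one.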

This paper was published in the proceedings of the 46th IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP 2021).

It results from Lenny Renault’s research internship at Deezer in 2020.