As music has become more widely available, especially on streaming platforms, listeners have developed distinct preferences for their varying listening situations, also known as contexts. Hence, there has been growing interest in taking the user’s situation into account when recommending music. Previous works have proposed user-aware autotaggers that infer situation-related tags from music content and the user’s global listening preferences. However, in a practical music retrieval system, such an autotagger can only be used if the context class is explicitly provided by the user.

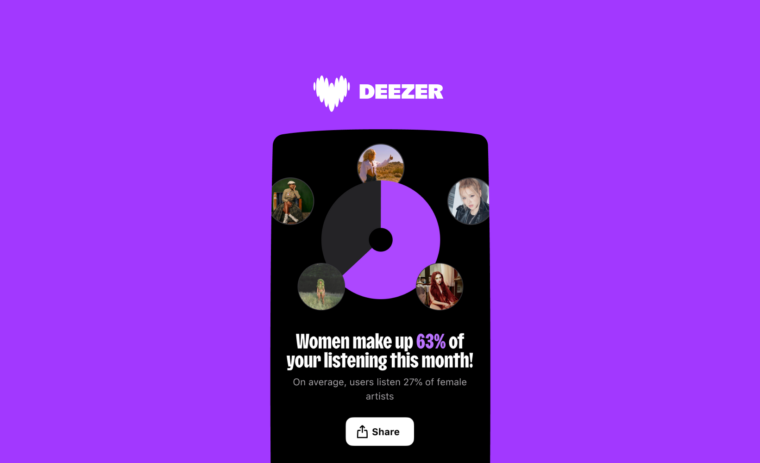

In this work, to design a fully automated music retrieval system, we propose to disambiguate the user’s listening information from their stream data. Specifically, we propose a system that can generate a situational playlist for a user at a given time 1) by leveraging user-aware music autotaggers, and 2) by automatically inferring the user’s situation from stream data (e.g. device, network) and the user’s general profile information (e.g. age). Experiments show that such a context-aware personalized music retrieval system is feasible, but that performance decreases for new users, for new tracks, or when the number of context classes increases.
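The two-step idea can be illustrated with a minimal Python sketch. It is not the system from the paper: the situation labels (`commute`, `work`, `relax`), the rule-based `infer_situation`, the genre-weighted `autotag_score`, and all data structures are hypothetical stand-ins for the learned situation predictor and the user-aware autotagger, and profile features such as age are omitted for brevity.

```python
from dataclasses import dataclass


@dataclass
class Session:
    """Stream-side features of one listening session (hypothetical schema)."""
    device: str   # e.g. "mobile" or "desktop"
    network: str  # e.g. "wifi" or "cellular"


@dataclass
class Track:
    """A track with content-based situation scores (hypothetical schema)."""
    genre: str
    tags: dict  # situation label -> content-based score in [0, 1]


def infer_situation(session):
    """Toy rules standing in for a learned situation classifier (assumption)."""
    if session.device == "mobile" and session.network == "cellular":
        return "commute"
    if session.device == "desktop":
        return "work"
    return "relax"


def autotag_score(track, genre_weight, situation):
    """Stand-in for a user-aware autotagger: the content score for the
    situation, scaled by the user's weight for the track's genre."""
    return track.tags.get(situation, 0.0) * genre_weight.get(track.genre, 1.0)


def situational_playlist(session, tracks, genre_weight, k=2):
    """Step 2 infers the situation; step 1 ranks tracks for that situation."""
    situation = infer_situation(session)
    ranked = sorted(
        tracks,
        key=lambda tid: autotag_score(tracks[tid], genre_weight, situation),
        reverse=True,
    )
    return situation, ranked[:k]


if __name__ == "__main__":
    catalogue = {
        "t1": Track("rock", {"commute": 0.9, "work": 0.1}),
        "t2": Track("ambient", {"commute": 0.5, "work": 0.8}),
        "t3": Track("pop", {"commute": 0.6, "relax": 0.7}),
    }
    user_genre_weight = {"ambient": 2.0, "rock": 0.5}
    print(situational_playlist(Session("mobile", "cellular"), catalogue, user_genre_weight))
```

In this toy setup the user never states a context: the session features alone select the situation, and the same catalogue would be ranked differently for another user or another device and network combination.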

This paper has been published in the proceedings of the 23rd International Society for Music Information Retrieval Conference (ISMIR 2022).